Project Keys are downloadable credential files that bundle one or more of the following into a single .json artifact:

- API access — a Client ID and Secret for the REST API, CLI, and Terraform

- Kafka access — SASL credentials and proxy endpoints for direct topic consumption

- MCP tool scoping — controls which tools an AI agent can use via the MCP Server

Prerequisites

- You need the Project Keys write permission (included in the Admin and Data Admin roles)

- Kafka access requires an AWS-hosted project with a dedicated namespace. If your project doesn’t meet this requirement, the Kafka option is disabled, with a tooltip explaining why

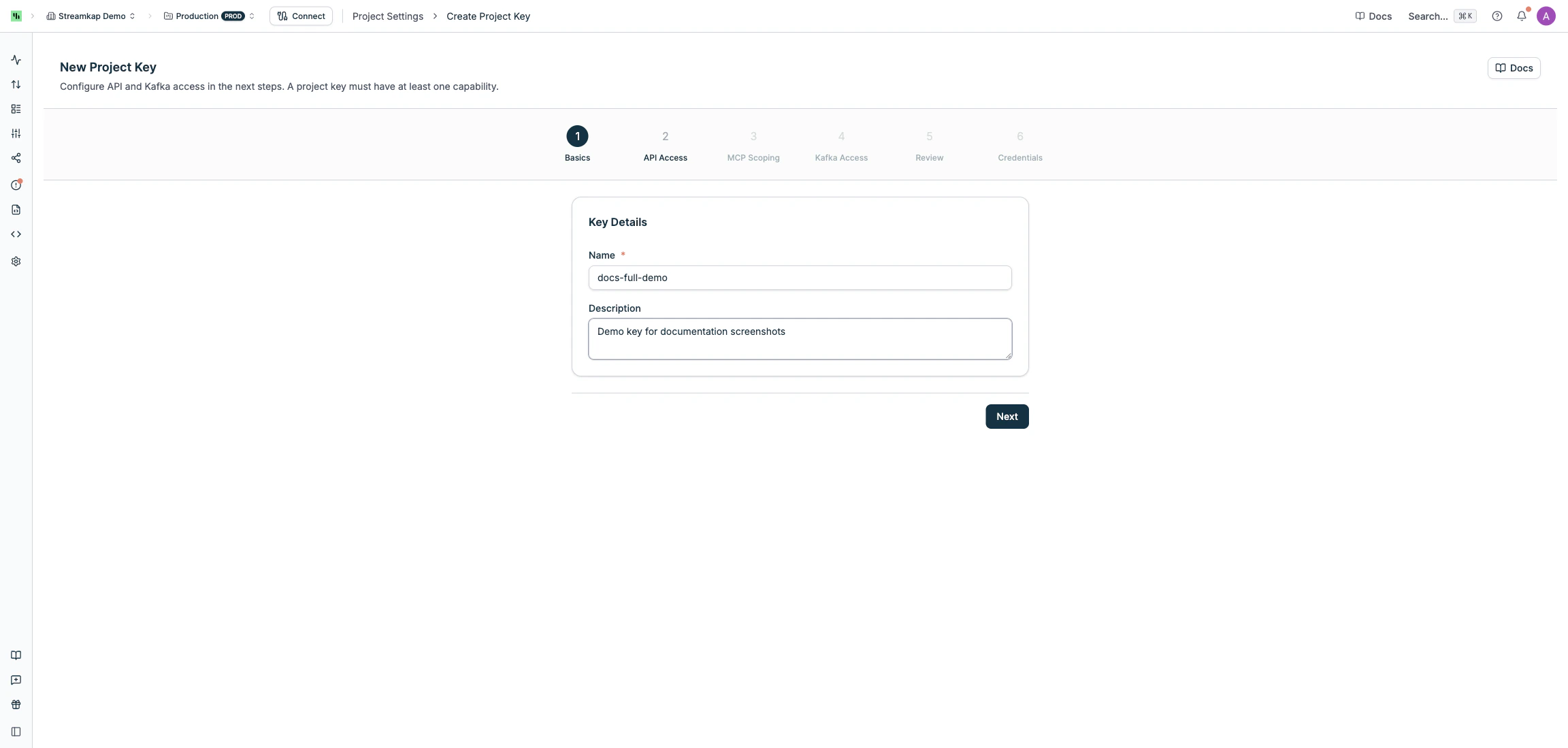

Create a Project Key

Navigate to Project Settings > Project Keys and click Create Project Key. The wizard walks you through six steps.

Step 1: Basics

Enter a Name (required, up to 100 characters) and an optional Description for the key.

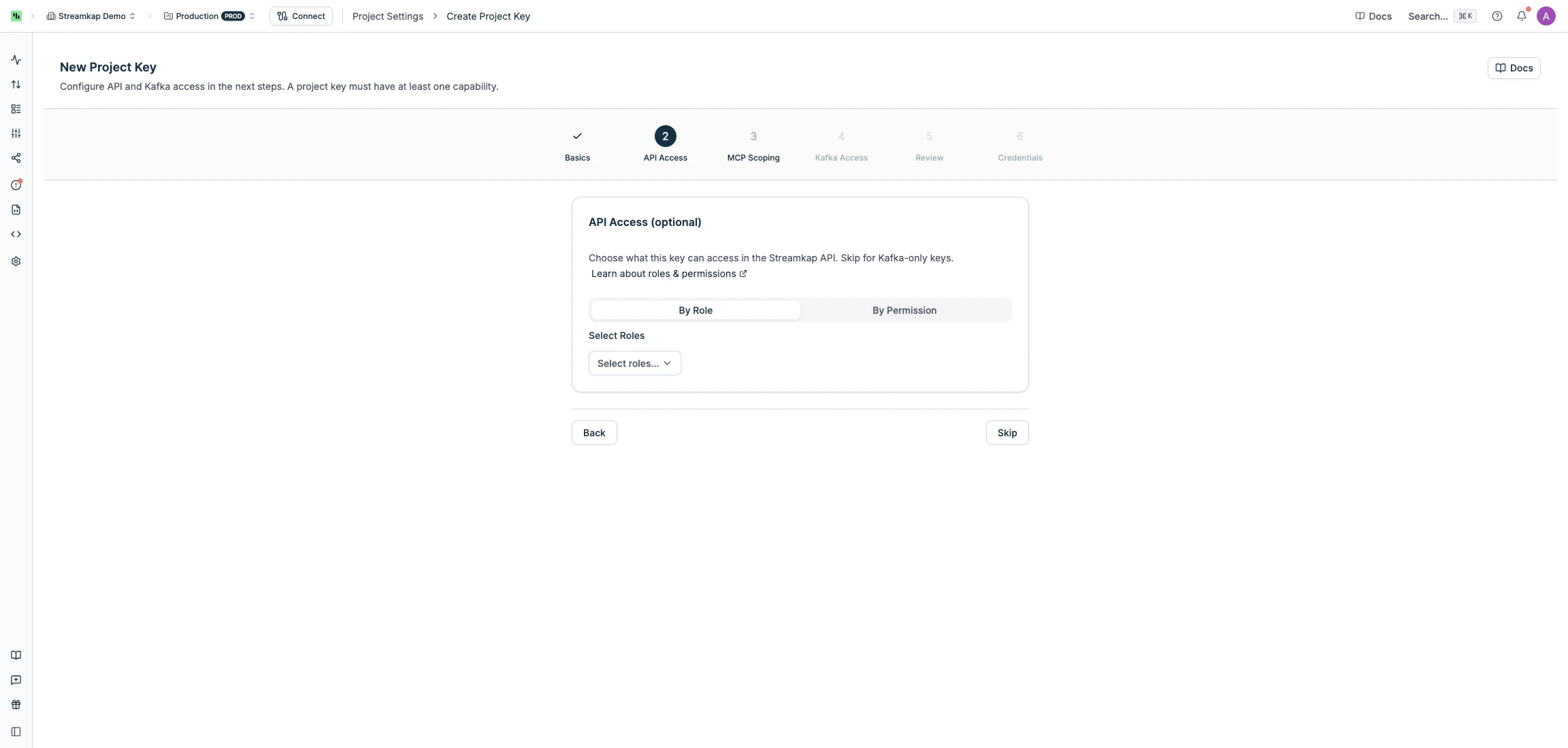

Step 2: API Access

Choose what this key can access in the Streamkap REST API. You can skip this step to create a Kafka-only key. Assign access using one of two modes:

- By Role (recommended) — select one or more roles (e.g. Admin, Data Admin, Read Only). Roles can be changed after creation.

- By Permission — select individual permissions for fine-grained control.

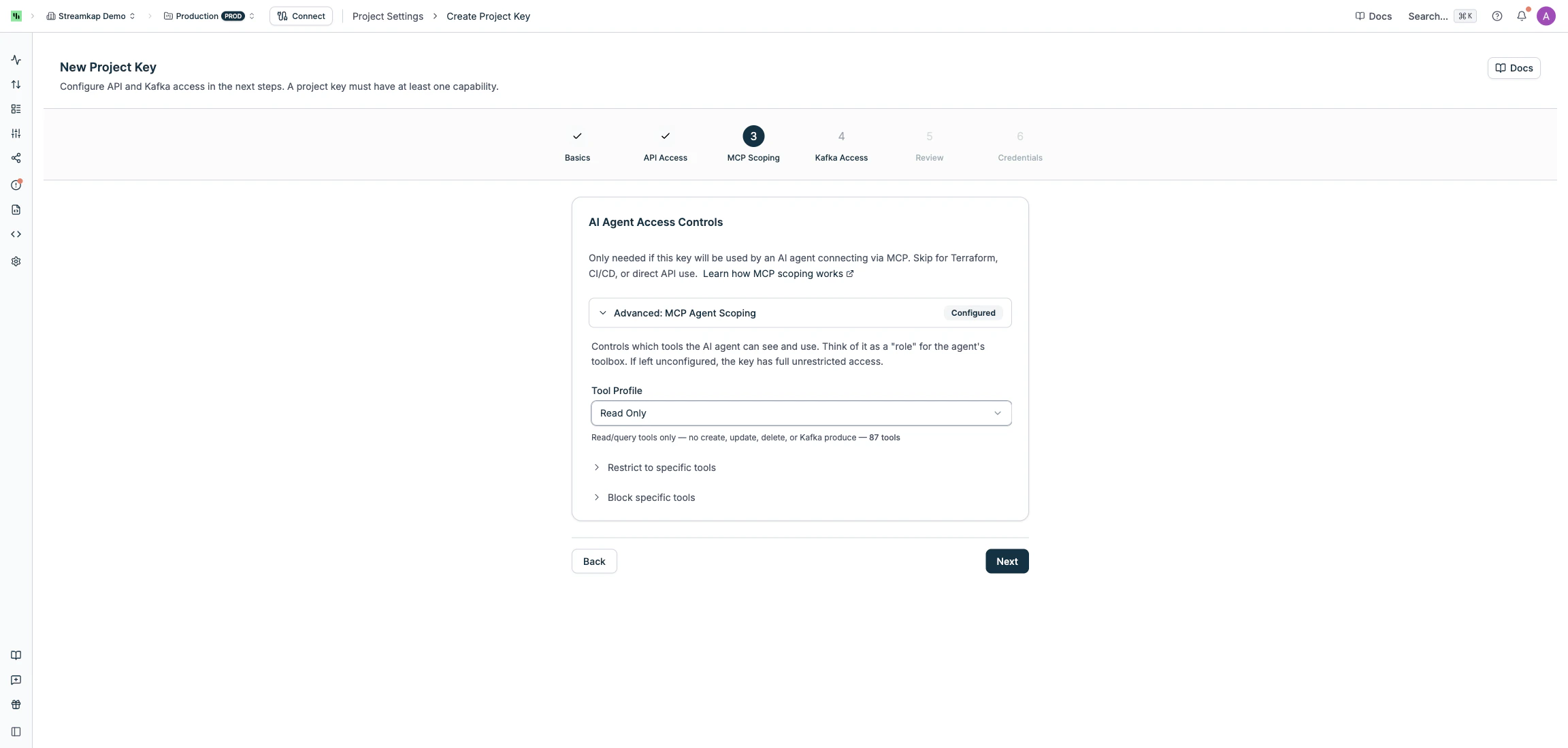

Step 3: MCP Scoping

If this key will be used by an AI agent via the MCP Server, you can restrict which tools the agent can call. Skip this step for CLI, Terraform, or direct API use. Choose a Tool Profile preset, or fine-tune access with allowed/blocked tool lists:

| Profile | Description |
|---|---|
| Full | All tools — no restrictions |
| Read Only | Read/query tools only — no create, update, delete, or Kafka produce |
| Agent Operator | Read + safe operations (pause, resume, restart) — no create or delete |
| Infra Admin | Full infrastructure management — no direct Kafka data access |

- Allowed tools — only these tools are available (overrides profile)

- Blocked tools — remove specific tools from the profile

Tool scoping is enforced server-side. The credential file includes the scoping configuration for reference, but the MCP server always checks the authoritative settings stored in Streamkap — editing the file has no effect.
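The interaction between a profile and the allowed/blocked lists can be sketched as follows. This is only an illustration of the rules described above, not the MCP Server's implementation: the tool names are hypothetical, and applying the blocked list on top of an allowed list is an assumption.

```python
# Hypothetical tool names per profile; the real tool catalogue is defined by the MCP Server.
PROFILE_TOOLS = {
    "Full": {"list_pipelines", "create_pipeline", "delete_pipeline", "pause_pipeline", "kafka_produce"},
    "Read Only": {"list_pipelines"},
    "Agent Operator": {"list_pipelines", "pause_pipeline"},
}


def effective_tools(profile, allowed=None, blocked=None):
    """Resolve the tool set for a key (illustrative only; enforcement is server-side)."""
    # A non-empty allowed list overrides the profile entirely.
    base = set(allowed) if allowed else set(PROFILE_TOOLS[profile])
    # Assumption: blocked tools are subtracted last, whichever base applies.
    return base - set(blocked or ())
```

For example, `effective_tools("Full", blocked=["kafka_produce"])` keeps every tool in the hypothetical Full profile except `kafka_produce`.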

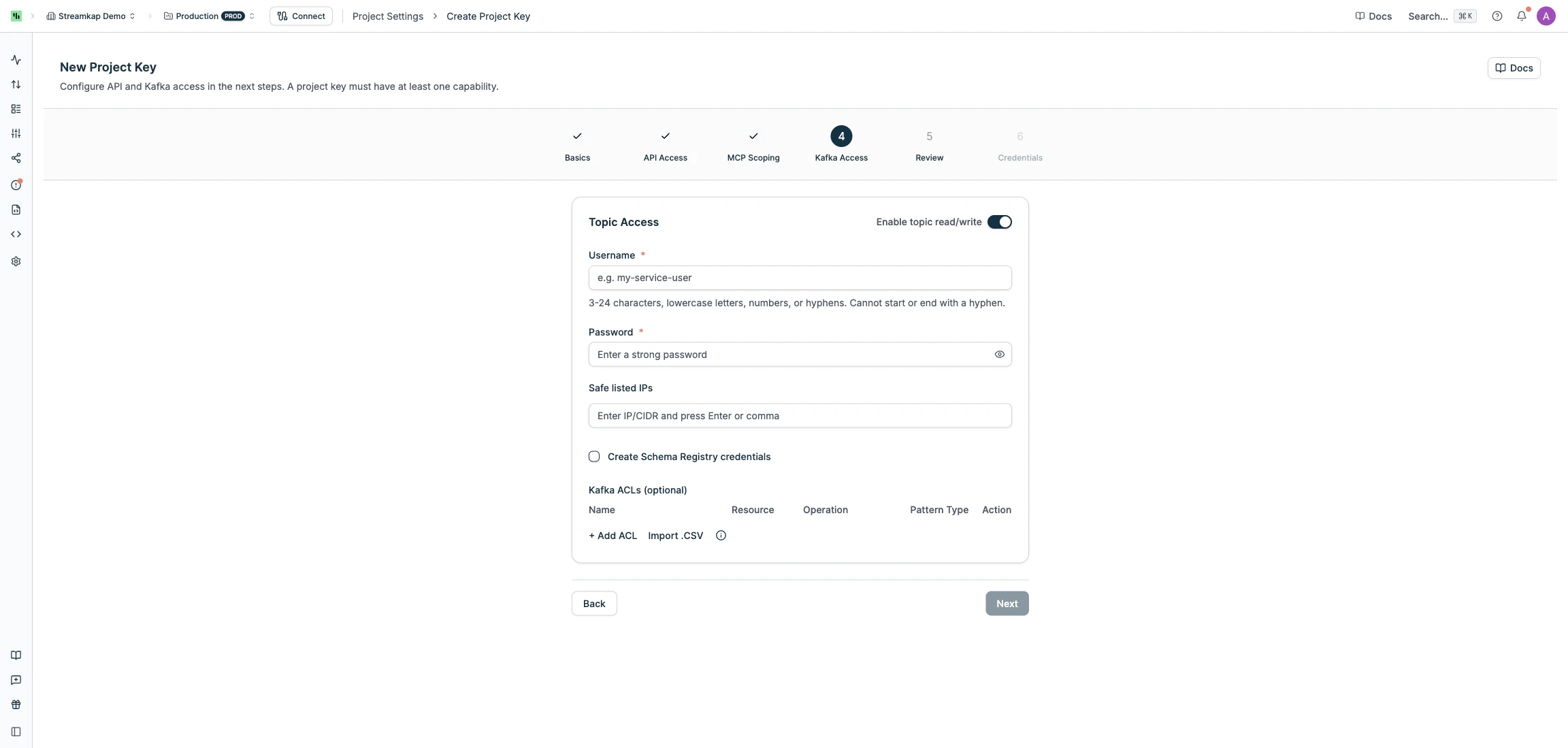

Step 4: Kafka Access

Toggle Enable topic read/write to create dedicated Kafka credentials alongside your key. This gives the key its own SASL username, proxy endpoints, and ACL rules. When enabled, configure:

- Username — 3-24 characters, lowercase letters, numbers, or hyphens. Cannot start or end with a hyphen.

- Password — 12-128 characters with at least one uppercase letter, one lowercase letter, one digit, and one special character

- Safe listed IPs (optional) — restrict connections to specific IP addresses or CIDR ranges (e.g. 192.168.1.0/24)

- Create Schema Registry credentials (optional) — generates additional credentials for schema management

- Kafka ACLs (optional) — define which topics and consumer groups this key can access. Click + Add ACL to add rules, or Import .CSV for bulk import.

  - Name — the topic name, consumer group name, or prefix to match

  - Resource — TOPIC or GROUP

  - Operation — READ, WRITE, CREATE, DELETE, ALTER, DESCRIBE, ALL, etc. (GROUP resources support READ, DELETE, and DESCRIBE)

  - Pattern Type — LITERAL (exact match) or PREFIXED (matches resources starting with the name)
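To check values before entering them in the wizard, the username and password rules and the two ACL pattern types can be sketched locally. This is a minimal sketch, not Streamkap's validation code: the function names are hypothetical, and treating "special character" as any ASCII punctuation character is an assumption.

```python
import re
import string

# 3-24 characters: lowercase letters, digits, or hyphens; no leading or trailing hyphen.
USERNAME_RE = re.compile(r"[a-z0-9][a-z0-9-]{1,22}[a-z0-9]")


def valid_username(name: str) -> bool:
    return USERNAME_RE.fullmatch(name) is not None


def valid_password(pw: str) -> bool:
    # Assumption: "special character" means any ASCII punctuation character.
    return (
        12 <= len(pw) <= 128
        and any(c.isupper() for c in pw)
        and any(c.islower() for c in pw)
        and any(c.isdigit() for c in pw)
        and any(c in string.punctuation for c in pw)
    )


def acl_matches(acl_name: str, pattern_type: str, resource_name: str) -> bool:
    """Return True if an ACL rule's name covers the given topic or group name."""
    if pattern_type == "LITERAL":
        return resource_name == acl_name  # exact match only
    if pattern_type == "PREFIXED":
        return resource_name.startswith(acl_name)  # any resource starting with the name
    raise ValueError(f"unknown pattern type: {pattern_type}")
```

With these definitions, a PREFIXED rule named `orders.` covers a topic called `orders.created`, while a LITERAL rule with the same name does not.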

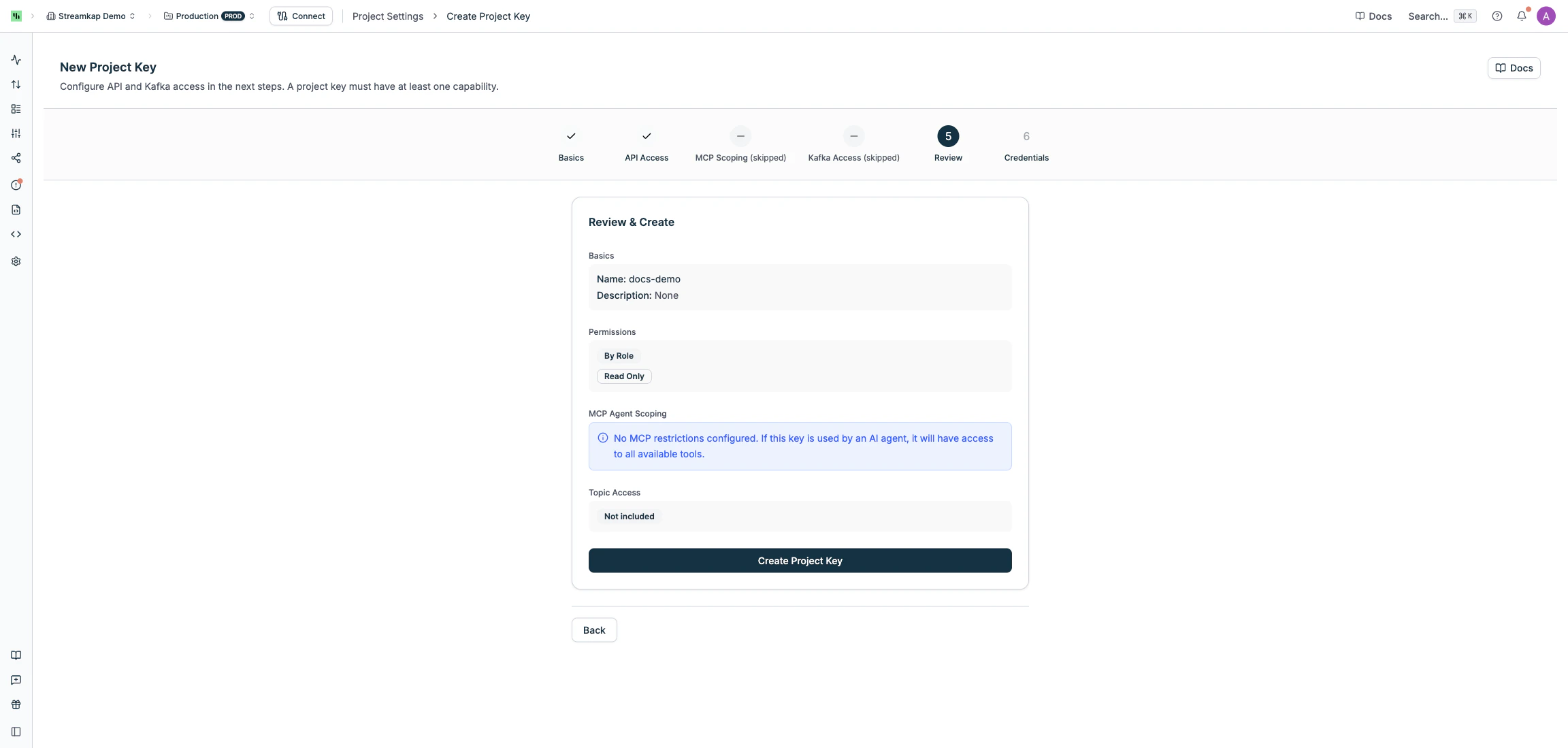

Step 5: Review & Create

Review your configuration and click Create.

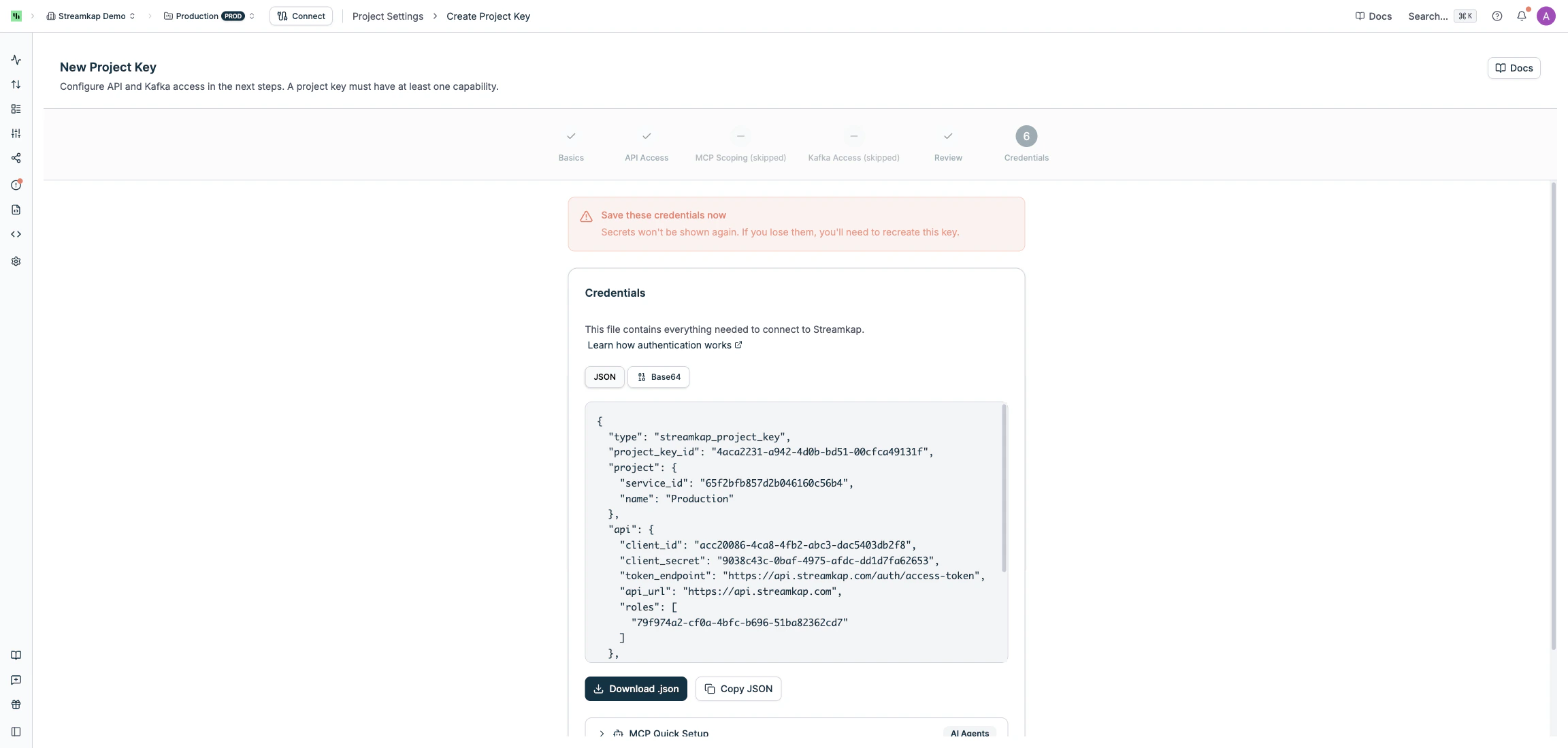

Step 6: Credentials

After creation, the credential file is displayed once. You can view it as JSON or Base64, and you must download or copy it before continuing.

- Download .json — saves as {key-name}-credentials.json

- Copy JSON or Copy Base64 — copies to clipboard

Credential File Structure

The downloaded file contains everything needed to connect. The api section is null for Kafka-only keys, and the kafka section is null for API-only keys.
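A small loader can use the null api and kafka sections to tell which kind of key a file represents. The sketch below is illustrative: the function names are hypothetical, only the api and kafka section names come from this page, and it assumes the Base64 view is simply the JSON document Base64-encoded.

```python
import base64
import json


def load_credentials(text: str) -> dict:
    """Parse a credential file given as raw JSON or as Base64-encoded JSON."""
    text = text.strip()
    if text.startswith("{"):
        return json.loads(text)
    # Assumption: the Base64 view is the JSON document, Base64-encoded.
    return json.loads(base64.b64decode(text).decode("utf-8"))


def access_type(cred: dict) -> str:
    """Classify a key by which sections of the credential file are non-null."""
    has_api = cred.get("api") is not None
    has_kafka = cred.get("kafka") is not None
    if has_api and has_kafka:
        return "API + Kafka"
    if has_api:
        return "API Only"
    if has_kafka:
        return "Kafka Only"
    raise ValueError("credential file has neither an api nor a kafka section")
```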

Authentication

A Project Key with API access can authenticate with the Streamkap API in two ways.

Option 1: Pass the entire credential file

Send the full credential file as the project_key field to the Get Access Token endpoint. The file can be passed as a Base64-encoded string or as a raw JSON object; both formats are accepted.

Option 2: Extract Client ID and Secret

You can extract api.client_id and api.client_secret from the credential file and pass them as client_id and secret in the request body, the same fields used by standalone API tokens. This works with the interactive API Reference playground.

Both options return an access_token and a refresh_token. Use the access token as a Bearer token for all subsequent API calls.
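The request bodies for the two options, and the header for later calls, can be sketched as plain data. The helper names are hypothetical, and the Base64 form is assumed to be the JSON text Base64-encoded. Sending the body to the Get Access Token endpoint is left out because its URL is not given on this page.

```python
import base64
import json


def token_request_from_file(cred: dict, as_base64: bool = False) -> dict:
    """Option 1: send the whole credential file in the project_key field."""
    if as_base64:
        # Assumption: the Base64 form is the JSON text, Base64-encoded.
        encoded = base64.b64encode(json.dumps(cred).encode("utf-8")).decode("ascii")
        return {"project_key": encoded}
    return {"project_key": cred}


def token_request_from_ids(cred: dict) -> dict:
    """Option 2: send only the extracted Client ID and Secret."""
    api = cred["api"]
    return {"client_id": api["client_id"], "secret": api["client_secret"]}


def bearer_headers(access_token: str) -> dict:
    """Headers for subsequent API calls, using the returned access_token."""
    return {"Authorization": f"Bearer {access_token}"}
```

Option 2 raises an error for a Kafka-only key, since its api section is null and there is no Client ID to extract.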

Using Your Project Key

A Project Key can be used with the REST API, MCP Server, CLI, Kafka consumers, and Terraform.

For the REST API, authenticate using the full credential file or the extracted Client ID and Secret, then use the JWT to call any endpoint. You can also try this interactively in the API Reference playground using the extracted client_id and secret.

Manage Project Keys

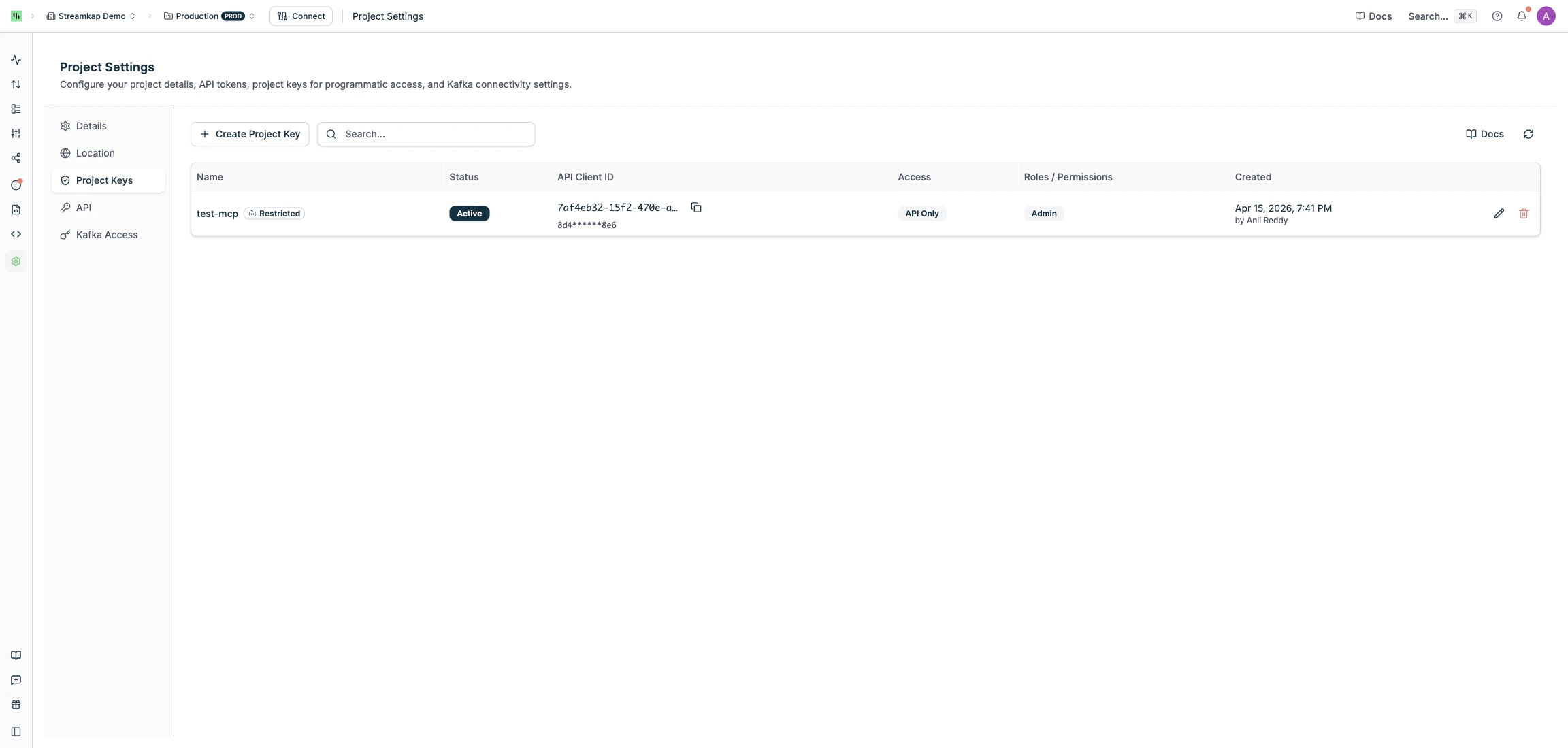

Viewing Keys

The Project Keys list shows all keys for the current project with their status, access type, roles, and creation date.

Key Status

| Status | Meaning |
|---|---|
| Active | Ready to use |
| Creating | Provisioning in progress |
| Failed | Creation failed — you can retry or delete |
| Deleting | Deletion in progress |
| Delete Failed | Deletion partially failed — retry the delete |

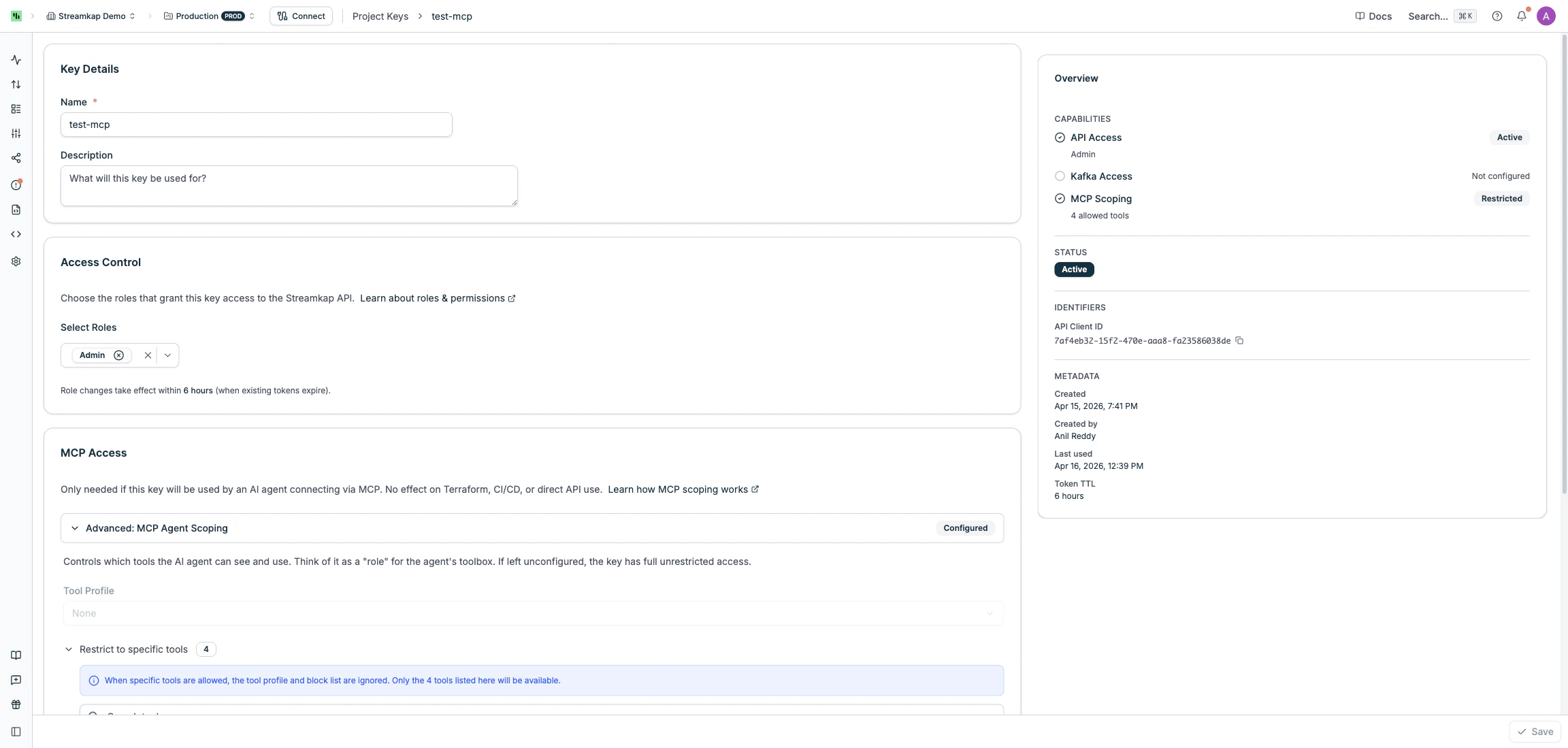

Editing a Key

Click the Edit button on any active key to change:

- Name and Description

- Roles (if the key uses role-based API access)

- MCP tool scoping (profile, allowed tools, blocked tools)

- Kafka ACLs and Safe listed IPs (if the key has Kafka access)

Role changes take effect when existing access tokens expire (typically within a few hours). The key does not need to be recreated.

Adding Capabilities

You can add API or Kafka access to an existing key without recreating it:

- Add Kafka to an API-only key — click Add Kafka Access on the edit page and configure username, password, and ACLs

- Add API to a Kafka-only key — click Add API Credentials and assign roles or permissions

Rotating Kafka Password

On the edit page for a key with Kafka access, use the Rotate Password option to set a new password. The new password takes effect immediately.

Deleting a Key

Click the Delete button to permanently remove a key and all its associated resources (API token, Kafka user, proxy endpoints). This action cannot be undone. If deletion fails (e.g. due to a transient infrastructure issue), the key moves to Delete Failed status. Click Retry Delete to try again.

Linked Resources

API tokens and Kafka users created by a Project Key are managed exclusively through the Project Key. They are protected from independent modification: edits and deletions must be done from the Project Key’s edit page.

Access Types at a Glance

| Type | REST API | Kafka | MCP Scoping | Requirements |
|---|---|---|---|---|
| API Only | Yes | No | Optional | Any cloud provider |
| Kafka Only | No | Yes | No | AWS, dedicated namespace |
| API + Kafka | Yes | Yes | Optional | AWS, dedicated namespace |
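The Requirements column reduces to one check, sketched here for illustration. The function name and the provider strings are hypothetical. Streamkap applies the real check in the wizard, which disables the Kafka option when the project does not qualify.

```python
def available_access_types(cloud_provider: str, dedicated_namespace: bool) -> list[str]:
    """Return the access types a project can create, per the table above."""
    types = ["API Only"]  # available on any cloud provider
    # Kafka access needs an AWS-hosted project with a dedicated namespace.
    if cloud_provider.strip().lower() == "aws" and dedicated_namespace:
        types += ["Kafka Only", "API + Kafka"]
    return types
```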

Related

- MCP Server — connect AI agents to Streamkap

- CLI — command-line management tool

- Terraform Provider — infrastructure as code for Streamkap resources

- API Reference — full REST API documentation

- Team Management — manage roles and permissions